The Interactive Era

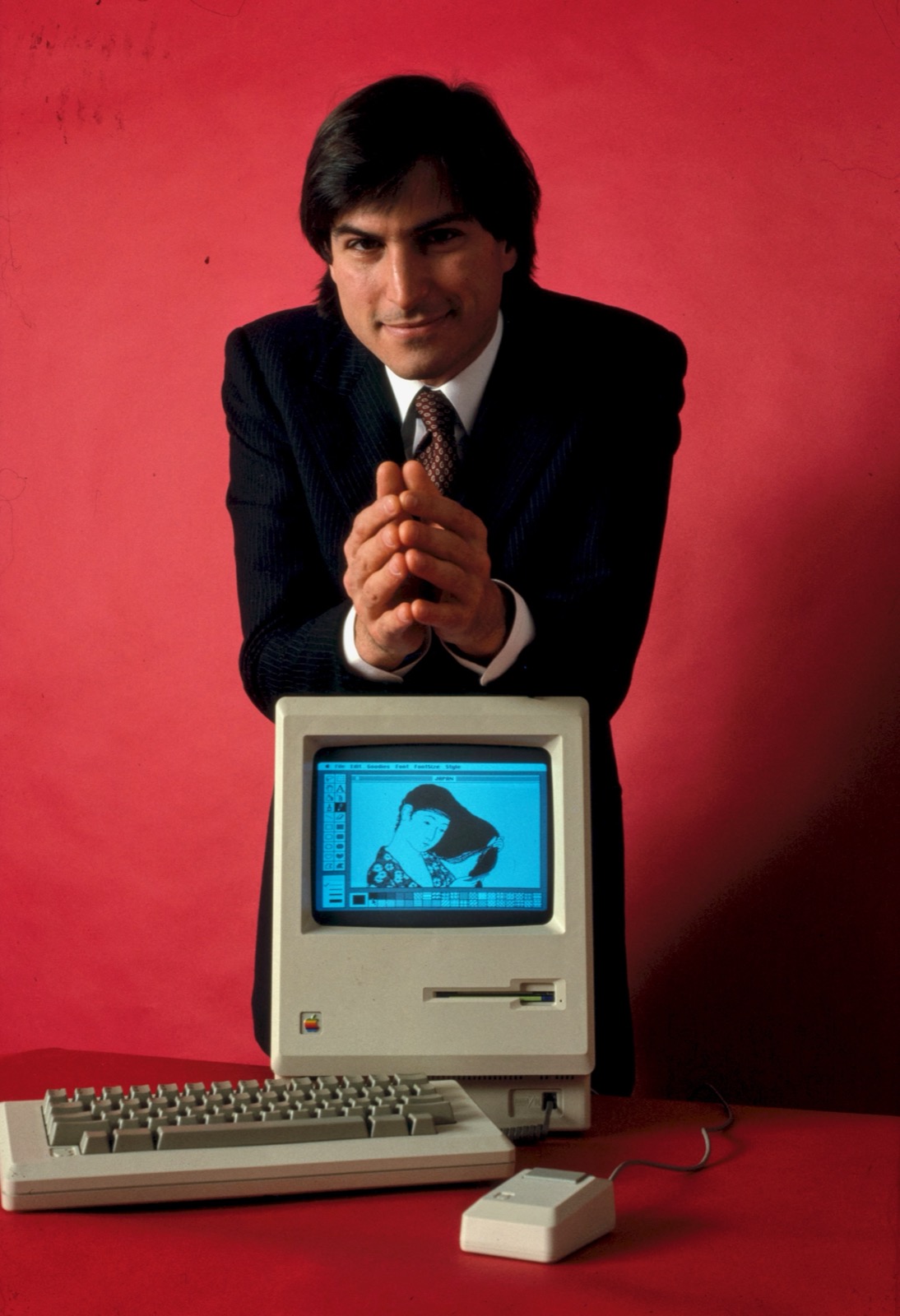

There's a photograph from 1984 of Steve Jobs introducing the Macintosh. He holds it up, and the room erupts in excitement, not because the Macintosh was smarter, but because it understood the way you think and want to work. You pointed at things. It responded. That was the GUI moment: technology that worked the way people do, not the other way around.

The terminal didn't disappear. Developers still live there. What the GUI did was expand who could participate without taking anything away from the people who already knew how to use the existing tools. That's what's happening with AI now.

Most AI today still meets you where it is: query, response, reset, repeat. Powerful, but still more like a series of one-shot exchanges than a real conversation.

But now the model is starting to stay in sync with you. There is audio that you can talk back to. Agents that hold context for hours. Sessions that remember where you've been.

At this point, output stops feeling like output. It starts to feel continuous. Video does the same thing, except it isn't producing a sentence. It's producing a world.

Video generation isn't flawless yet, but it's capable enough that the bottleneck has moved.

The constraint isn't intelligence per token anymore. It's tokens per second.

Today's best video models still feel transactional: start a generation, wait thirty seconds to two minutes, pay about twenty-five cents, get five seconds of footage.
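To make those figures concrete, here is a small back-of-the-envelope calculation using only the numbers quoted above. The variable names are illustrative, and the inputs are the rough figures from the text, not measured benchmarks.

```python
# Rough figures quoted above: a five-second clip for about 25 cents,
# after a wait of thirty seconds to two minutes.
clip_seconds = 5
price_cents = 25
wait_seconds_low, wait_seconds_high = 30, 120

# Cost of footage, scaled up from a single clip.
cents_per_second = price_cents / clip_seconds
dollars_per_minute = cents_per_second * 60 / 100
dollars_per_hour = cents_per_second * 3600 / 100

# How much slower than playback the generation runs.
slowdown_low = wait_seconds_low / clip_seconds
slowdown_high = wait_seconds_high / clip_seconds

print(f"{cents_per_second:.0f} cents per second of footage")
print(f"${dollars_per_minute:.2f} per minute, ${dollars_per_hour:.2f} per hour")
print(f"{slowdown_low:.0f}x to {slowdown_high:.0f}x slower than real time")
```

At those rates a minute of footage costs about three dollars, an hour about 180 dollars, and generation runs 6 to 24 times slower than playback, which is why the experience feels like placing an order rather than having a conversation.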

But streaming generation, cached model state, and shorter denoising paths can cut the cost per second of footage by 100x to 500x. And when that happens, the shape of the product changes too:

- Frame-by-frame streaming

- Long generations that don't drift

- Interactive steering while the model is still running

- Continuous generation that keeps responding

Building for that requires the same kind of shift GUI computing once required. GUIs needed graphics cards, event loops, windowing systems, and other infrastructure that didn't exist for batch computing.

Interactive generative AI needs its own compute layer too: inference that holds session state, runs continuously at real-time speeds, and keeps the model alive between turns.
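As a toy illustration of what "holding session state" and "staying alive between turns" can mean, here is a minimal Python sketch. It is not uRun's API: `Session`, `SessionStore`, `steer`, and `frames` are hypothetical names invented for this example, and the string "frames" stand in for real model output and cached model state.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterator, List


@dataclass
class Session:
    """Hypothetical per-user session; a stand-in for cached model state."""
    prompt: str
    frame_index: int = 0
    history: List[str] = field(default_factory=list)

    def steer(self, new_prompt: str) -> None:
        # Steering changes the conditioning without resetting frame_index.
        self.prompt = new_prompt

    def frames(self, count: int) -> Iterator[str]:
        # Yield one frame at a time so the caller can steer between frames.
        for _ in range(count):
            frame = f"frame {self.frame_index}: {self.prompt}"
            self.history.append(frame)
            self.frame_index += 1
            yield frame


class SessionStore:
    """Keeps sessions in memory between turns instead of rebuilding them."""

    def __init__(self) -> None:
        self._sessions: Dict[str, Session] = {}

    def get_or_create(self, session_id: str, prompt: str) -> Session:
        if session_id not in self._sessions:
            self._sessions[session_id] = Session(prompt=prompt)
        return self._sessions[session_id]


store = SessionStore()
session = store.get_or_create("demo", "a forest path")

stream = session.frames(4)
first_two = [next(stream), next(stream)]
session.steer("a forest path at night")  # steer while the stream is still open
rest = list(stream)

# A later turn finds the same session, so generation resumes at frame 4.
resumed = store.get_or_create("demo", "unused: the session already exists")
next_turn = list(resumed.frames(1))
```

In this sketch the steering call takes effect on the very next frame, and the second turn picks up where the first left off instead of starting over. A real system would keep GPU-resident state behind the same shape of interface.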

Today, we're announcing exactly that: uRun. The inference cloud for the interactive era.

Holding session state at GPU speed through a million-user spike isn't a new problem for us.

Our engineering team includes Keegan McCallum, who scaled generative video inference across tens of thousands of GPUs at Luma, serving a million users in four days. Sean Kane has spent years shaping how engineers run production systems at scale: co-authoring *Docker: Up & Running*, building New Relic's original container platform, and leading invention work on container monitoring. Matt Krzus spent years at AWS compiling models down to edge hardware for computer vision workloads across AWS Neuron, Just Walk Out, and Panorama, before there was even a clean name for that category of work.

Generative models are already running on uRun today: video pipelines, avatar pipelines, and world models, each one holding session state and staying alive between turns.

LingBot-World Fast is one of them, a real-time interactive world model we brought up from freshly released weights in eleven days.

We're opening a waitlist and looking for early design partners: research teams with models ready to run interactively today, game studios folding real-time generation into production pipelines, and product teams replacing scripted interactions with AI-generated video avatars.

In 1984, the people in that audience didn't yet know what they'd build with a mouse. They just felt the distance between their goals and the machine's capabilities shrink. That's the kind of capability we want to put in your hands.

If you want to build this with us, join the waitlist.